Note: I have re-written this post in response to comments from biostatistician Thomas Lumley below.

It made headlines around the world: Facebook ‘likes’ can reveal users’ politics, sexual orientation, IQ. According to Michal Kosinski, the lead researcher, information on "gender, race, political views, religion, sexual orientation, personality, IQ and so on," can be extracted from the knowledge that a person likes Lady Gaga or Harley-Davidsons.

The study noted that many of the most predictive "likes" weren't obvious ones. For example, fewer than five per cent of users labelled as "gay" were connected with gay groups such as the "No H8 campaign." Instead, likes such as "Britney Spears" and "Desperate Housewives" were "moderately indicative of being gay."

Meanwhile, the "likes" most correlated with high intelligence were thunderstorms, The Colbert Report, science and curly fries…

How accurate were they? According to one report:

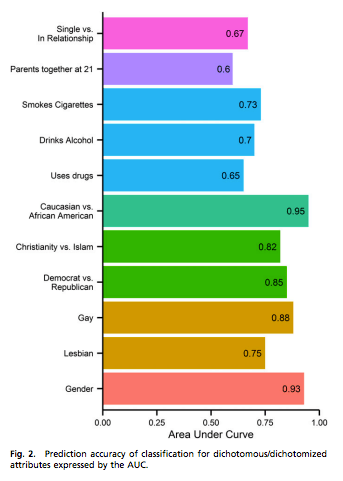

…researchers could tell Democrats and Republicans apart in 85% of the cases; black and white people apart in 95% of the cases; and homosexual and heterosexual men apart in 88% of the cases.

That sounds impressive, doesn't it? But just how accurate were the authors' predictions?

The chart on the right is taken from the original article. Seventy-five to 88 percent accuracy for sexual orientation sounds pretty impressive. But what does that actually mean?

The authors coded people in their sample as "lesbian" if they were female, and chose "women" in response to Facebook's "interested in" question. Gay men were identified in a parallel way. Using this methodology, 4.3 percent of males in the sample were categorized as gay by the authors, while 2.4 percent of females were lesbian.

Because the number of people identified in the sample as gay or lesbian was so low, the simple prediction rule "not gay or lesbian" is highly accurate. "Not lesbian" correctly predicts the sexual orientation of 97.6 percent of women in the authors' sample. "Not gay" correctly predicts the sexual orientation of 95.7 percent of the men.

How much better were the authors able to do than this? The "accuracy" number reported in the chart above, and picked up by the media, is an Area Under the Curve (AUC) statistic. This measures "the probability that a classifier will rank a randomly chosen positive instance higher than a randomly chosen negative one." It's calculated in the same way as a Gini coefficient. A score close to 1 – which means the ability to tell positives from negatives – is a good thing.
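To make the pairwise definition concrete, here is a minimal Python sketch that computes an AUC by comparing every positive score with every negative score. The function name `pairwise_auc` and the scores are made up for illustration; they are not the paper's data or the authors' code.

```python
from itertools import product

def pairwise_auc(pos_scores, neg_scores):
    """Share of (positive, negative) pairs in which the positive scores higher.

    Ties count as half a correct ranking.
    """
    wins = 0.0
    for p, n in product(pos_scores, neg_scores):
        if p > n:
            wins += 1.0
        elif p == n:
            wins += 0.5
    return wins / (len(pos_scores) * len(neg_scores))

# Hypothetical classifier scores, for illustration only
pos = [0.9, 0.6, 0.4]
neg = [0.7, 0.5, 0.3, 0.2, 0.1]
auc = pairwise_auc(pos, neg)
print(auc)  # 12 of the 15 pairs are ranked correctly -> 0.8
```

Nothing in this calculation involves a cutoff: the AUC describes how well the scores rank people, not how often a yes/no prediction is right.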

Thomas Lumley, in the comments below, does some simulations based on the AUC numbers given in the paper to figure out how often the authors would be expected to predict a man's sexual orientation correctly:

With 5% gay, using a prediction threshold of 0.5 in the logistic regression model, I can get a total error rate of 4.8%, with about 1% of people predicted to be gay. That's made up of about 0.45% of the population falsely predicted to be gay, and just under 4.4% falsely predicted to be straight. It's not a terribly impressive improvement over chance, but it is an improvement (and I chose the threshold in a separate sample from the one I used to estimate the accuracy).

So, in a sample of 1000 people, 50 of whom are gay, the authors would identify 10 people whom they predict to be gay. Of these, about 4 actually would be straight, and the rest would be gay. An overwhelming majority – 88 percent – of gay people are not identified using this prediction method, and about 40 percent of those identified as gay are actually straight. It's better than one would do trying to guess who is gay by saying eeny-meeny-miny-moe. However the total error rate is almost as high as one would obtain using a simple "everyone is straight" decision rule.
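The arithmetic behind those counts can be checked with a short Python sketch. The three rates are the ones Thomas reports above (5% prevalence, about 0.45% false positives, just under 4.4% false negatives); the variable names are my own.

```python
n = 1000
prevalence = 0.05          # share of the sample coded as gay
false_pos_rate = 0.0045    # share of everyone wrongly predicted to be gay
false_neg_rate = 0.044     # share of everyone wrongly predicted to be straight

gay = n * prevalence                      # people actually coded as gay
false_pos = n * false_pos_rate            # straight people predicted gay
false_neg = n * false_neg_rate            # gay people predicted straight
true_pos = gay - false_neg                # gay people predicted gay
predicted_gay = true_pos + false_pos      # everyone predicted gay

missed_share = false_neg / gay                  # gay people the rule misses
wrong_among_flagged = false_pos / predicted_gay # flagged people who are straight
total_error = (false_pos + false_neg) / n       # overall error rate
baseline_error = prevalence                     # error of "everyone is straight"

print(f"predicted gay: {predicted_gay:.1f}, of whom straight: {false_pos:.1f}")
print(f"gay people missed: {missed_share:.0%}")
print(f"flagged but straight: {wrong_among_flagged:.0%}")
print(f"total error {total_error:.2%} vs baseline {baseline_error:.2%}")
```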

By changing the prediction threshold, the authors could identify more potentially gay people, but a higher percentage of those would be straight, and the error rate would increase.

The presumption in medical research is that false negatives are much more costly than false positives – it's much better to take out a healthy appendix or two than to have a patient's appendix rupture. Yet that is not necessarily true in advertising. I get irritated when the New York Times' "recommended for you" list includes every article on same-sex anything, or a list of hot blonde/lusty firefighter "singles in your area" appears on the right of my screen. In advertising the total error rate matters, and false positives may be as costly as false negatives.

As an aside, the accuracy of identifying sexuality from the "interested in" Facebook option is questionable. I took a look at the pages of my own gay and lesbian Facebook friends, and asked a friend's son to do the same. Not a single one of these GLBT friends – whether out or closeted – revealed on their profile that they were interested in people of the same gender.

The same issues – having an error rate not much better than one would obtain with a simple decision rule – arise with many of the other characteristics analyzed in the paper. 90 percent of the sample were Christian. By always guessing "Christian" one would be correct nine times out of ten. Only 21 percent of the sample takes drugs. By always guessing "no drugs", one would have a 79 percent success rate.
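As a rough illustration, the "simple decision rule" benchmark is just the share of the larger class. The sketch below uses the sample shares quoted in this post; the function name `majority_baseline` is mine.

```python
def majority_baseline(share):
    """Accuracy of always guessing the more common of two classes."""
    return max(share, 1.0 - share)

# Sample shares quoted in the post
shares = {
    "gay (men)": 0.043,
    "lesbian (women)": 0.024,
    "Christian": 0.90,
    "uses drugs": 0.21,
}

for label, share in shares.items():
    accuracy = majority_baseline(share)
    print(f"{label}: always guessing the majority is right {accuracy:.1%} of the time")
```

Any reported accuracy for these traits has to be read against these benchmarks rather than against 50 percent.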

It is true that the authors did much better than a simple decision rule when predicting a person's gender. They had a prediction accuracy of 93 percent on gender, whereas 60 percent of their sample were female. They also did relatively well on guessing whether someone was Caucasian or African American. But I don't think "scientists are able to guess people's gender accurately based on their Facebook likes" would have gathered many headlines – it's not that challenging to do.

The moral of the story is that it's sensible to be at least somewhat Bayesian, and to take all available information into account when making predictions.

There are two types of people:

1) Bayesians

2) People who don’t yet know that they’re Bayesians

Frances,

While I agree in general, and almost wrote about this paper on StatsChat, I think the ‘95% accuracy’ is not as bad as that. They are using it to describe the area under the Receiver Operating Characteristic curve. Guessing without information other than sample prevalences would give an AUC of 0.5. An AUC of 0.95 is pretty good: if you had one person from each group, the score underlying the classifier would be higher for the gay person 95% of the time.

Since I’m not on Facebook I couldn’t get information about how reliable their sexual orientation data were — I’m not surprised it’s bad.

Even after closing the member loophole, PNAS publishes some truly unimpressive data analysis — did you see the great ape midlife crisis, last year, when the researchers seem to have mistaken a nonlinear decline for a U shape?

Sorry, I misread the graph, but AUC of 0.65 for sexual orientation is still not bad, if the data had been right.

Thomas – thanks for commenting. I’d be interested in hearing more on your take on the paper.

In their paper, they say “Fig. 2 shows the prediction accuracy of dichotomous variables expressed in terms of the area under the receiver-operating characteristic curve (AUC), which is equivalent to the probability of correctly classifying two randomly selected users one from each class (e.g., male and female)”

What I want to know is the probability of correctly classifying a straight person as straight, and a gay or lesbian person as gay or lesbian. Do you know if it’s possible to infer those numbers from the info that’s given in the paper?

The vast majority of my statistical work is frequentist but one must be very careful to choose one’s words. The fair, non-Bayesian way to do this is to report the number of gay people you identify as gay and straight and the same for the straight people. Without those four quadrants this sort of analysis is useless.

Or use Bayes’ theorem. Of course, the last time I used Bayes’ theorem for this purpose my prior was way wrong 😉

Point of clarification (sorry if I’m being dense). In describing the results for dichotomous variables the original paper says AUC “is equivalent to the probability of correctly classifying two randomly selected users one from each class”. I take this to mean that, for example, in the case of sexual orientation:

Prob(Model correctly identifies sexual orientation|Subject is straight) X Prob(Model correctly identifies sexual orientation|Subject is gay) = 0.88.

Is this correct?

That description of AUC from the paper is incomplete; it confuses the prediction score and the prediction. I don’t think they actually did any predictions.

The correct AUC characterisation in terms of random pairs is: Suppose the predictions are generated by thresholding a numeric score Y. The AUC is the probability that a randomly selected gay person has a higher score than a randomly selected straight person. That’s not the same as the prediction being correct (unless, for example, the numeric score is binary) even in this paired setting.

If the prediction score was normal in each group with unit standard deviation, an AUC of 0.88 would put the means about 1.6 units apart. If the proportion of gays is 2% a little simulation with a sample of 1000 suggests that you might get a positive predictive value of 15% with a negative predictive value of 99%. That is, if a guy is predicted to be gay, the probability is maybe 1 in 7 that he is, if he is predicted to be straight, the probability he is gay is about 1 in 100. You can reduce one error rate by increasing the other, and obviously these are for ‘gay’ as defined by the researchers.
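The link between the gap in means and the AUC can be sketched in Python. This assumes, as in the comment above, that scores are normal with unit standard deviation in both groups; in that case the difference between a random score from each group is normal with variance 2, so the AUC is Φ(gap/√2). The function names are mine.

```python
from math import sqrt
from statistics import NormalDist

std_normal = NormalDist()  # mean 0, standard deviation 1

def auc_from_gap(gap):
    """AUC when both groups have unit-variance normal scores `gap` apart."""
    return std_normal.cdf(gap / sqrt(2))

def gap_from_auc(auc):
    """Inverse of auc_from_gap: the gap in means implied by a given AUC."""
    return sqrt(2) * std_normal.inv_cdf(auc)

print(f"gap implied by AUC 0.88: {gap_from_auc(0.88):.2f}")
print(f"AUC implied by gap 1.6:  {auc_from_gap(1.6):.3f}")
```

Under these assumptions an AUC of 0.88 corresponds to a gap of roughly 1.66, which is in line with the "about 1.6" used here.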

It’s misleading to call this 88% accuracy without further explanation, but it is also a lot better than you could do by chance.

The other thing I was worried about was out-of-sample vs in-sample accuracy. They did do cross-validation, so this is probably ok, but I would have liked to see actual out of sample predictive accuracy.

Giovanni – that’s what it sounds like given what they say in the paper, but I don’t think that’s right – see Thomas’s comments.

This might be useful: you use Stata, right? This is what I did (except I used R and 2% rather than 5% as the population prevalence)

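```stata
* Simulate 10,000 people; the first 500 are coded gay (5% prevalence).
* Assumed fix: the snippet as posted had _n <= 50, which is only 0.5%.
set obs 10000
gen gay = _n <= 500
* Prediction score: standard normal, shifted up by 1.6 for the gay group
drawnorm predscore
replace predscore = predscore + 1.6 if gay == 1
* Fit the logistic model and plot the ROC curve (lroc reports the AUC)
logistic gay predscore
lroc
* Predicted probability of being gay for each person
predict predp
* Classify at a 0.1 cutoff and cross-tabulate against the truth
gen prediction = .
replace prediction = predp > 0.1
table prediction gay
* Repeat with a lower cutoff of 0.05
replace prediction = predp > 0.05
table prediction gay
```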

Thomas: “That is, if a guy is predicted to be gay, the probability is maybe 1 in 7 that he is, if he is predicted to be straight, the probability he is gay is about 1 in 100.”

But what % of the sample are they predicting to be gay? You say this is a lot better than you can do by chance, and this would be important if the test was, say, to figure out risk of cancer, when people really wanted to know.

Otherwise, if they’re predicting that say 10% of the sample is gay, 6/7 of that 10% will actually be straight, which is more errors than one would get if one guessed that everyone was straight.

Thomas,

Ah…now I understand. Thanks.

Hmm. That one may not have worked — 1000 people seems to be too discrete to get any improvement with 2%. You’re right that for total unweighted error rate you need a positive predictive value of at least 0.5. I’m more used to weighted error rates when false positives and false negatives aren’t equal.
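The point about needing a positive predictive value above 0.5 follows from simple bookkeeping, sketched here in Python. The function name and the second set of inputs are hypothetical; the first set uses the 2% prevalence, the "say 10%" flagged share and the 1-in-7 positive predictive value discussed above.

```python
def total_error(prevalence, flagged_share, ppv):
    """Unweighted error rate when `flagged_share` of people are predicted
    positive and a fraction `ppv` of those really are positive."""
    true_pos = flagged_share * ppv
    false_pos = flagged_share * (1 - ppv)
    false_neg = prevalence - true_pos
    if false_neg < 0:
        raise ValueError("more true positives than positives in the population")
    # Algebraically: prevalence + flagged_share * (1 - 2 * ppv)
    return false_pos + false_neg

# 2% prevalence, 10% flagged, PPV of 1 in 7
print(f"{total_error(0.02, 0.10, 1 / 7):.2%} vs baseline 2.00%")

# Hypothetical case with PPV above one half: flagging now helps a little
print(f"{total_error(0.05, 0.01, 0.6):.2%} vs baseline 5.00%")
```

Because the error rate equals the prevalence plus the flagged share times (1 − 2 × PPV), flagging anyone only beats "everyone is straight" when the PPV exceeds one half.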

Ok, let’s try again.

With 5% gay, using a prediction threshold of 0.5 in the logistic regression model, I can get a total error rate of 4.8%, with about 1% of people predicted to be gay. That’s made up of about 0.45% of the population falsely predicted to be gay, and just under 4.4% falsely predicted to be straight. It’s not a terribly impressive improvement over chance, but it is an improvement (and I chose the threshold in a separate sample from the one I used to estimate the accuracy).

Under the Normal model, if the AUC is above 0.5 and the population is large enough to be regarded as continuous you can always predict with lower total error rate than not using the data, though not necessarily so as you’d notice. With other shapes of ROC curve and small proportions you might not be able to improve at all on the total error rate, and then the prediction would only be useful in situations where false negatives were more important than false positives, so that a weighted error rate made sense.
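For readers who prefer a closed-form check to a simulation, here is a Python sketch of the same Normal model: unit-variance scores with means 0 and 1.6, 5% prevalence, and a prediction of "gay" whenever the posterior probability exceeds 0.5. It is my own reconstruction under those assumptions, not Thomas's R code, so the figures differ slightly from his simulated ones.

```python
from math import log
from statistics import NormalDist

def posterior_half_errors(prevalence, gap):
    """Population error shares when predicting positive if P(positive | score) > 0.5.

    Scores are N(0, 1) for negatives and N(gap, 1) for positives, gap > 0.
    """
    std_normal = NormalDist()
    # Posterior > 0.5  <=>  likelihood ratio exp(gap*y - gap**2/2) > (1 - prevalence)/prevalence
    cutoff = (log((1 - prevalence) / prevalence) + gap ** 2 / 2) / gap
    false_pos = (1 - prevalence) * (1 - std_normal.cdf(cutoff))
    false_neg = prevalence * std_normal.cdf(cutoff - gap)
    return cutoff, false_pos, false_neg

cutoff, fp, fn = posterior_half_errors(prevalence=0.05, gap=1.6)
print(f"score cutoff: {cutoff:.2f}")
print(f"false positives: {fp:.2%}, false negatives: {fn:.2%}")
print(f"total error: {fp + fn:.2%} vs 5.00% for 'everyone is straight'")
```

The total comes out a little below 5%, which matches the claim that the model beats the naive rule, though not by much.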

The Stata code lets you experiment with cutoffs and see what’s happening.

A pretty interesting blog / article about what information credit card companies together with retailers can and do obtain, and how they use it:

Most people here avoid paying with credit card.

In former times, publishing a piece in PNAS, Nature, or Science was seen here as “Wow, you have truly arrived on the global scale”.

Today, having repeatedly read pretty low-quality stuff there, I asked some folks I know who have published there, and the open cynicism was impressive.

genauer – the article you link to in your first comment is a great example of the fact that in advertising even true positives can be costly – people don’t like retailers knowing too much about them.

The article you linked to is taken from a much longer piece in the NY Times. There they describe how people started getting seriously disturbed by these super-targeted ads – that Target knew they were pregnant before their friends and family did. So now what Target does is send people who are pregnant something that looks just like a regular advertising flyer, but has a big spread on baby stuff right in the middle – that way people are less aware that they are being targeted.

To provide some “thick description” (maybe interesting given the recent “culture” theme here),

I came to chris blattman via the Financial Times AlphaVille

the famous Nando Xmas advertising, and the link via Chris “a very dictator Christmas”, also linked at the bottom of :

It actually made me believe again, somewhat, that there are a few good (wo)men left in NY academia and development aid.

I heard Kosinski interviewed on CBC. He is a good salesman. Here is the address of the interview:

http://www.cbc.ca/thecurrent/episode/2013/03/13/how-likes-on-facebook-builds-a-profile-of-your-identity-without-consent/

http://www.nature.com/srep/2013/130325/srep01376/full/srep01376.html#auth-1

provides some more information on how data that at first seem only weakly correlated are used for purposes that those who provide them do not really like, to put it mildly.

I have some recent, detailed local experience, but I don’t want to annoy people with “German” stuff.